Day 15 : All about streams

- Streams are used to handle streaming data in node.js.

- Streams can be readable, writable or both.

- All streams are instances of the `EventEmitter` class.

- We can use the `stream` module by requiring it in the following way :

var stream = require('stream');

- Readable stream : Streams that are used to perform read operations are readable streams.

- Writable stream : Streams that are used to perform write operations are writable streams.

- Duplex stream : Duplex streams are streams that implement both the readable and the writable interfaces.

- Transform stream : Transform streams are duplex streams that can modify or transform data as it is written and read, so the output is computed in some way from the input.
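As a rough illustration of the four types listed above, the sketch below creates a bare-bones instance of each class exported by the `stream` module and checks that all of them are event emitters. The no-op `read`, `write` and `transform` implementations are placeholders made up for this example; they are not part of the tutorial.

```javascript
const { Readable, Writable, Duplex, Transform } = require('stream');
const EventEmitter = require('events');

// Placeholder implementations (assumptions for this sketch): they do nothing useful.
const streams = {
  readable: new Readable({ read() {} }),
  writable: new Writable({ write(chunk, encoding, callback) { callback(); } }),
  duplex: new Duplex({
    read() {},
    write(chunk, encoding, callback) { callback(); }
  }),
  transform: new Transform({
    transform(chunk, encoding, callback) { callback(null, chunk); }
  })
};

// Every stream type inherits from EventEmitter.
const isEmitter = {};
for (const [name, s] of Object.entries(streams)) {
  isEmitter[name] = s instanceof EventEmitter;
  console.log(name + ' is an EventEmitter: ' + isEmitter[name]);
}

// A transform stream is a specialised duplex stream.
const transformIsDuplex = streams.transform instanceof Duplex;
console.log('transform is a Duplex: ' + transformIsDuplex);
```

Because every stream is an event emitter, the usual `on()` and `once()` methods are how we subscribe to the stream events described in the rest of this chapter.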

Streams that are used to perform read operations are readable streams. All aspects of readable streams are explained below :

- Modes: A readable stream operates in one of two modes :

  - paused :

    - If the readable is in paused mode, then we need to call `stream.read()` explicitly to read the chunks of data.

    - By default, all readable streams start in paused mode.

    - We can switch the readable to paused mode by calling the `stream.pause()` method when there are no pipe destinations.

    - When pipe destinations are attached, we can switch the readable to paused mode by removing them with the `stream.unpipe()` method.

  - flowing :

    - If the readable is in flowing mode, data is read from the underlying source automatically and handed to the consumer through events as soon as it is available.

    - We can switch the readable stream to flowing mode by calling the `stream.resume()` method.

    - We can switch the readable stream to flowing mode by calling the `stream.pipe()` method.

    - Attaching a handler for the `data` event also switches the readable stream to flowing mode.

    - If the readable is in flowing mode and there is no consumer to handle the data, the data can be lost.
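The two modes can be sketched as follows. This is a minimal example, assuming a hand-fed readable: `makeSource` is a hypothetical helper written for this illustration, and the chunk values are arbitrary.

```javascript
const { Readable } = require('stream');

// Hypothetical helper for this sketch: a readable preloaded with some chunks.
function makeSource(chunks) {
  const source = new Readable({ read() {} }); // data is supplied via push() below
  for (const chunk of chunks) source.push(chunk);
  source.push(null); // signal the end of the data
  return source;
}

// Paused mode: nothing is delivered until we pull it with read().
const pausedSource = makeSource(['a', 'b', 'c']);
const pulled = pausedSource.read(); // everything buffered so far, as a Buffer
console.log('pulled:', pulled.toString());
console.log('next read():', pausedSource.read()); // null, nothing left to pull

// Flowing mode: attaching a 'data' handler makes the stream push chunks to us.
const flowingSource = makeSource(['x', 'y']);
flowingSource.on('data', (chunk) => console.log('data:', chunk.toString()));
const flowingAfterHandler = flowingSource.readableFlowing; // true

flowingSource.pause(); // back to paused mode, data stays in the internal buffer
const pausedAfterPause = flowingSource.isPaused(); // true

flowingSource.resume(); // flowing again, the buffered chunks are emitted
```

In paused mode the consumer decides when data leaves the buffer, while in flowing mode the stream decides, which is why a flowing stream without a `data` handler or pipe destination can drop data.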

- Examples: The following use readable streams, either directly or in the form of duplex/transform streams :

  - HTTP requests ( Server )

  - HTTP responses ( Client )

  - fs module read streams

  - zlib module

  - crypto module

  - TCP sockets

  - process.stdin

- Events :

  - readable : This event is fired when there is data available to be read from the stream.

  - data : This event is fired whenever the stream hands ownership of a chunk of data over to a consumer.

  - error : This event is fired when the stream is unable to generate data due to some internal failure or when the stream tries to push an invalid chunk of data.

  - close : This event is fired when the stream is closed. It indicates that no more events will be emitted and no further computation will occur.

  - end : This event is fired when all the data has been read. It indicates that there is no more data to be consumed.
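To see the events above in action, here is a small sketch that records the order in which they fire. It assumes a Node.js release that provides `Readable.from()`; the two strings are sample data made up for this example.

```javascript
const { Readable } = require('stream');

// Readable.from() builds a readable from an iterable; the strings are sample data.
const source = Readable.from(['first ', 'second']);
const seen = []; // records the order in which the events fire

source.on('data', (chunk) => seen.push('data:' + chunk));
source.on('end', () => seen.push('end'));
source.on('error', (err) => seen.push('error:' + err.message));

// Resolves once 'close' fires, i.e. after the stream is finished for good.
const closed = new Promise((resolve) => {
  source.on('close', () => {
    seen.push('close');
    resolve(seen);
  });
});

closed.then((events) => console.log(events));
```

On a recent Node.js release, where streams are destroyed automatically after they end, this is expected to log the two `data` entries followed by `end` and then `close`.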

- Methods :

  - `readable.pause()` : This method switches the stream from flowing mode to paused mode. Any data that becomes available stays in the internal buffer.

  - `readable.resume()` : This method switches the stream from paused mode to flowing mode, and the stream resumes emitting `data` events.

  - `readable.isPaused()` : This method checks the current operating state of the readable stream. If it returns `true`, the readable stream is paused.

  - `readable.pipe()` : This method attaches a writable stream to the readable, which switches the readable to flowing mode and makes it push its data to the attached writable.

  - `readable.unpipe()` : This method detaches a writable stream previously attached to the readable stream.

  - `readable.read()` : This method pulls data out of the internal buffer. The data is returned as a `Buffer` unless an encoding has been specified using `readable.setEncoding()`. If there is no data to pull, `null` is returned.

  - `readable.setEncoding()` : This method sets the character encoding for the readable stream, so that data is returned as strings. By default, the data is returned in the form of buffers.

  - `readable.unshift()` : This method pushes a chunk of data back into the internal buffer.

  - `readable.wrap()` : This method wraps an old-style stream so that it can be used as the data source of a readable stream.

  - `readable.destroy()` : This method destroys the readable stream, and the stream releases any resources it holds.
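A few of the methods above can be tried out on a hand-fed readable. This is only a sketch: the `HEADER:body` payload is invented for the example, and the stream is fed with `push()` instead of a real data source.

```javascript
const { Readable } = require('stream');

const readable = new Readable({ read() {} }); // fed manually via push()
readable.setEncoding('utf8'); // read() now returns strings instead of Buffers
readable.push('HEADER:body'); // sample payload made up for this sketch

// Pull exactly 7 characters out of the internal buffer.
const header = readable.read(7);
console.log(header); // HEADER:

// Changed our mind: put the chunk back at the front of the buffer.
readable.unshift(header);

// read() without a size returns everything that is buffered.
const everything = readable.read();
console.log(everything); // HEADER:body

readable.pause();
console.log(readable.isPaused()); // true

readable.destroy(); // release the stream; no more data will be produced
console.log(readable.destroyed); // true
```

Reading a few characters and pushing them back with `unshift()` is a common way to peek at the start of a stream before deciding who should consume the rest.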

Streams that are used to perform write operations are writable streams. All aspects of writable streams are explained below :

- Examples: The following use writable streams, either directly or in the form of duplex/transform streams :

  - HTTP requests ( Client )

  - HTTP responses ( Server )

  - fs module write streams

  - zlib module

  - crypto module

  - TCP sockets

  - process.stdout

  - process.stderr

- Events :

  - drain : If a call to the `stream.write(chunk)` method returns `false`, this event is fired when it is appropriate to resume writing data.

  - pipe : This event is fired when the `stream.pipe()` method is called on a readable stream, adding this writable to the readable's set of destinations.

  - unpipe : This event is fired when the `stream.unpipe()` method is called on a readable stream, removing this writable from the readable's set of destinations.

  - error : This event is fired when an error occurred while writing or piping the data.

  - close : This event is fired when the stream is closed. It indicates that no more events will be emitted and no further computation will occur.

  - finish : This event is fired after `stream.end()` has been called and all the data has been flushed successfully.
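The relationship between `write()` returning `false` and the `drain` event can be sketched as below. The writable here is a made-up slow sink: the 4 byte `highWaterMark` and the `setImmediate` delay are assumptions chosen only to provoke backpressure.

```javascript
const { Writable } = require('stream');

const received = [];
const slowWriter = new Writable({
  highWaterMark: 4, // tiny buffer limit in bytes, chosen only to trigger backpressure
  write(chunk, encoding, callback) {
    received.push(chunk.toString());
    setImmediate(callback); // pretend the underlying resource is slow
  }
});

// 11 bytes exceed the 4 byte limit, so write() asks us to stop by returning false.
const canContinue = slowWriter.write('hello world');
console.log('keep writing?', canContinue); // false

const finished = new Promise((resolve, reject) => {
  slowWriter.on('error', reject);
  // 'drain' tells us the buffer has emptied and writing may resume.
  slowWriter.once('drain', () => slowWriter.end('bye'));
  // 'finish' fires after end() once all data has been flushed.
  slowWriter.on('finish', () => resolve(received));
});

finished.then((chunks) => console.log('written:', chunks));
```

Waiting for `drain` before writing more keeps memory usage bounded when the producer is faster than the destination.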

- Methods :

  - `writable.cork()` : This method forces all the written data to be buffered in memory. The buffered data is flushed in either of the following scenarios :

    - The `stream.uncork()` method is called.

    - The `stream.end()` method is called.

  - `writable.uncork()` : This method flushes all the data buffered since the `stream.cork()` method was called.

  - `writable.write()` : This method writes some data to the stream and calls the given callback once the data has been handled.

  - `writable.setDefaultEncoding()` : This method sets the default encoding for the writable stream.

  - `writable.end()` : This method signals that no more data will be written to the writable stream.

  - `writable.destroy()` : This method destroys the writable stream, after which it can no longer be written to.
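The effect of `cork()` and `uncork()` is easiest to see with a sink that records how it was called. The following is a minimal sketch; the `batches` array and the single-letter chunks are invented for the example.

```javascript
const { Writable } = require('stream');

const batches = []; // each entry is one call into the underlying sink
const sink = new Writable({
  write(chunk, encoding, callback) {
    batches.push([chunk.toString()]);
    callback();
  },
  // writev() receives all chunks that were buffered, e.g. while corked.
  writev(chunks, callback) {
    batches.push(chunks.map((entry) => entry.chunk.toString()));
    callback();
  }
});

sink.setDefaultEncoding('utf8'); // encoding assumed for string chunks
sink.cork();        // start buffering
sink.write('a');
sink.write('b');
sink.write('c');
const beforeUncork = batches.length; // 0: nothing has reached the sink yet
sink.uncork();      // flush the three buffered chunks in one go
sink.end();         // no more data will be written

console.log(beforeUncork, batches);
```

Corking is mainly useful when many small chunks are written in quick succession, since the sink can then handle them as one batch.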

Duplex streams are streams that implement both the readable and the writable interfaces. The most common example of a duplex stream is the `net.Socket` class of the `net` module.

A better explanation of how duplex streams work is as follows :

Suppose we build a socket in node.js that has to transmit and receive data simultaneously; this can be achieved using a duplex stream. We then have two independent channels in the network, where one channel is used for transmitting data and the other for receiving data.
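The idea of two independent channels can be sketched with a hand-made duplex stream. This is not how `net.Socket` is implemented; the `transmitted` array and the `ping`/`pong` payloads are assumptions used only to make both sides visible.

```javascript
const { Duplex } = require('stream');

const transmitted = []; // data written into the duplex (outgoing channel)
const channel = new Duplex({
  // Readable side: fed with push() below to simulate incoming data.
  read() {},
  // Writable side: just records what was sent.
  write(chunk, encoding, callback) {
    transmitted.push(chunk.toString());
    callback();
  }
});

channel.write('ping'); // goes to the writable side
channel.push('pong');  // arrives on the readable side
const receivedData = channel.read().toString();

console.log('transmitted:', transmitted); // [ 'ping' ]
console.log('received:', receivedData);   // pong
```

Note that what is written to the duplex does not show up on its readable side: the two sides are unrelated unless the implementation connects them.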

- Examples: The following use duplex streams :

  - Sockets (TCP) : They use `duplex` streams for implementing sockets.

  - zlib : It uses `duplex` streams for gzip compression and decompression.

  - crypto : It uses `duplex` streams for performing encryption, decryption and creating message digests.

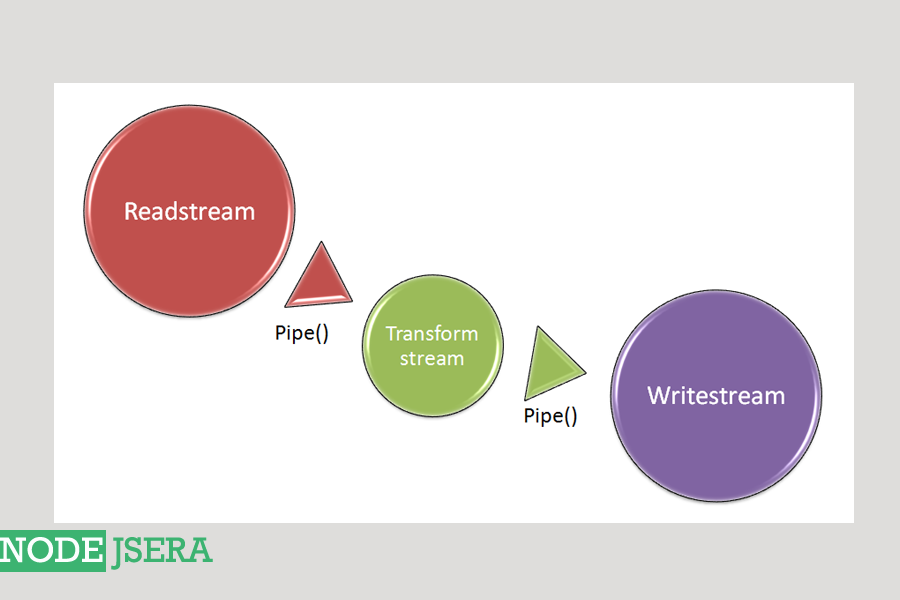

Transform streams are duplex streams that can modify or transform data as it is written and read, so the output is computed in some way from the input. These streams read the input data, transform it using the manipulating function and output the new data as shown below :

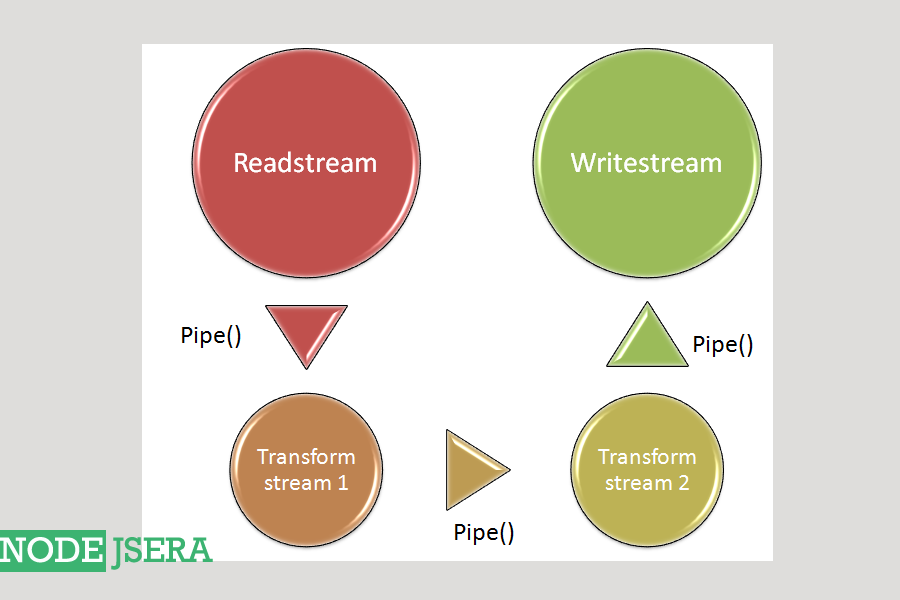
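In code, the manipulating function is the `transform` option passed to the `Transform` constructor. The sketch below upper-cases its input; the upper-casing and the sample text are just an example choice.

```javascript
const { Transform } = require('stream');

// The manipulating function here upper-cases each chunk (an example choice).
const upperCase = new Transform({
  transform(chunk, encoding, callback) {
    callback(null, chunk.toString().toUpperCase());
  }
});

let output = '';
const ended = new Promise((resolve, reject) => {
  upperCase.on('error', reject);
  upperCase.on('data', (chunk) => { output += chunk; });
  upperCase.on('end', () => resolve(output));
});

upperCase.write('hello ');
upperCase.end('streams');

ended.then((text) => console.log(text)); // HELLO STREAMS
```

We write to the writable side and read the transformed result from the readable side of the same object.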

We can also chain streams together to create complex processes by piping one into the next.
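A chain of this kind might look like the following sketch, which compresses some text with gzip and immediately decompresses it again. The `collector` writable and the sample strings are assumptions made for the illustration, and `Readable.from()` requires a reasonably recent Node.js release.

```javascript
const { Readable, Writable } = require('stream');
const zlib = require('zlib');

let restored = '';
// Hypothetical destination for this sketch: gathers the final output in a string.
const collector = new Writable({
  write(chunk, encoding, callback) {
    restored += chunk.toString();
    callback();
  }
});

const roundTrip = new Promise((resolve, reject) => {
  const source = Readable.from(['chained ', 'streams']);
  const gzip = zlib.createGzip();
  const gunzip = zlib.createGunzip();
  // pipe() does not forward errors, so each stage gets its own handler.
  for (const stage of [source, gzip, gunzip, collector]) stage.on('error', reject);
  collector.on('finish', () => resolve(restored));
  // Each pipe() call returns its destination, which allows chaining.
  source.pipe(gzip).pipe(gunzip).pipe(collector);
});

roundTrip.then((text) => console.log(text)); // chained streams
```

Each stage only sees a chunk at a time, so the whole chain can process data that is much larger than the available memory.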

- Examples: The following use transform streams :

  - zlib : It uses `transform` streams for compression and decompression, for example the stream returned by the `zlib.createDeflate()` method.

  - crypto : It uses `transform` streams for performing encryption, decryption and creating message digests.

- Methods :

  - `transform.destroy()` : This method destroys the stream and, if an error is passed, emits an `error` event. After this method call, the `transform` stream releases all the internal resources it was using.
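A short sketch of `transform.destroy()` follows. The pass-through transform and the error message are made up for the example, and the exact order of the `error` and `close` events may differ between Node.js versions.

```javascript
const { Transform } = require('stream');

// A transform that forwards chunks unchanged (placeholder for this sketch).
const passThrough = new Transform({
  transform(chunk, encoding, callback) { callback(null, chunk); }
});

const events = [];
const closed = new Promise((resolve) => {
  passThrough.on('error', (err) => events.push('error:' + err.message));
  passThrough.on('close', () => {
    events.push('close');
    resolve(events);
  });
});

passThrough.destroy(new Error('stopped early')); // hypothetical failure reason
console.log(passThrough.destroyed); // true

closed.then((list) => console.log(list));
```

Registering an `error` handler before calling `destroy()` with an error matters, because an unhandled `error` event would otherwise crash the process.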

The following code snippet shows how we can use streams in our code :

    // require fs module for file system
    var fs = require('fs');

    // write data to a file using a writable stream
    var wdata = "I am working with streams for the first time";
    var myWriteStream = fs.createWriteStream('aboutMe.txt');

    // handler for the error event of the write stream
    myWriteStream.on('error', function(err){
      console.log(err);
    });

    // 'finish' fires once all the data has been flushed to the file
    myWriteStream.on('finish', function() {
      console.log("data written successfully using streams.");
      console.log("Now trying to read the same file using read streams ");

      var myReadStream = fs.createReadStream('aboutMe.txt');

      // add handlers for our read stream
      var rContents = ''; // to hold the read contents
      myReadStream.on('data', function(chunk) {
        rContents += chunk;
      });
      myReadStream.on('error', function(err){
        console.log(err);
      });
      // 'end' fires when the whole file has been read
      myReadStream.on('end', function(){
        console.log('read: ' + rContents);
        console.log('performed write and read using streams');
      });
    });

    // write data
    myWriteStream.write(wdata);

    // done writing
    myWriteStream.end();

We can run it using the following command :

> node filename_streams.js

In this chapter of the 30 days of node tutorial series, we learned the basics of streams: what they are, the types of streams (readable, writable, duplex and transform), and lastly a coding example showing how we can use streams in our code.